Training & Export

Requires an active connection to the ONE AI Cloud.

This page covers the full model lifecycle: creating a model instance, configuring and running training, evaluating results with testing, and exporting the model for deployment.

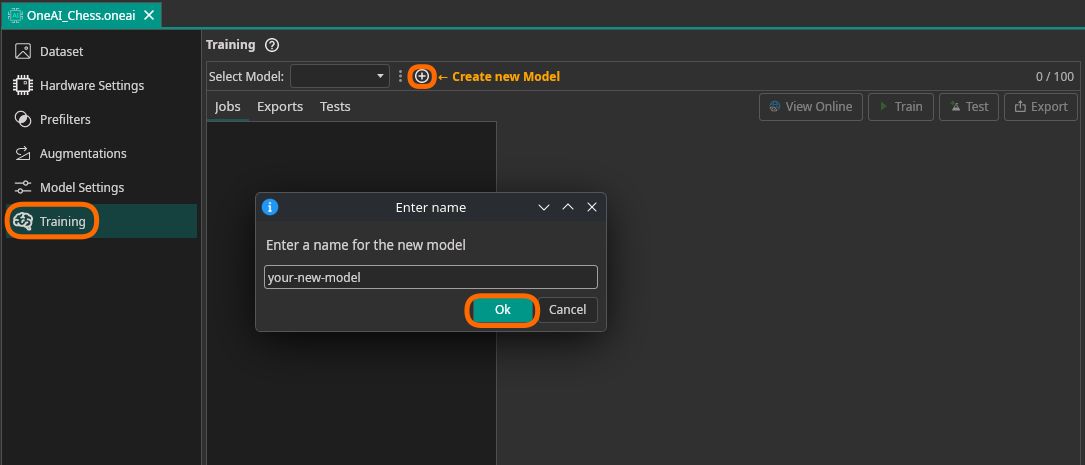

Creating a Model

Before training, you need to create a model instance. Click the + button in the Train tab to add a new model.

Training

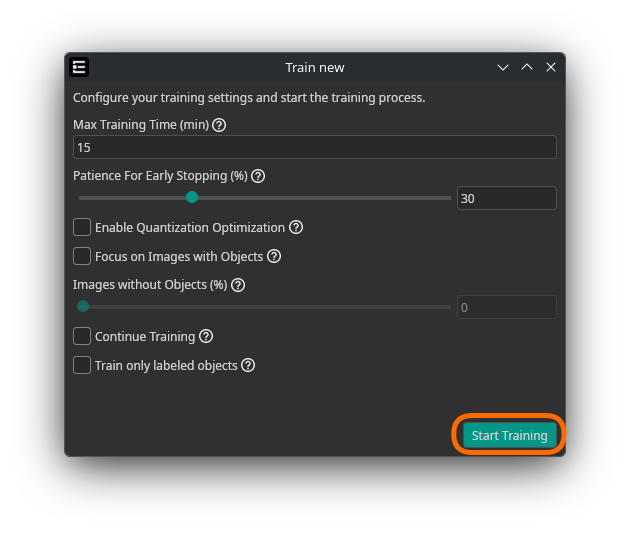

Select the target model, then click Train to open the training dialog.

Parameters

| Parameter | Description |
|---|---|
| Max Training Time (min) | Maximum training duration in minutes. Simpler tasks (e.g. basic classification) may need only 10 minutes; more complex tasks (e.g. object detection) may need 60 minutes or more. You can review the results after an initial training run and extend training as needed. |
| Patience for Early Stopping (advanced) | Percentage of the training time without improvement after which training stops automatically. Recommended: 5–15%. Setting it to 100% (the full training time) ensures the model always trains for the full Max Training Time. |
| Enable Quantization Optimization | Trains a quantization-aware model, which helps the model adapt to quantized operations and improves final accuracy on quantized exports. This may slow down training, so consider starting without it for a faster initial evaluation. |
| Focus on Images with Objects | Trains only on images that contain annotated objects. This helps the model learn to detect objects faster and handle many different objects, but it spends less time learning backgrounds. Only visible for Object Detection and Segmentation tasks. |
| Enrichment with Images without Objects (%) | When "Focus on Images with Objects" is enabled, controls how many images without objects are included. 100% means equal numbers of images with and without objects. |
| Train only labeled objects (advanced) | Trains only on labeled objects and disregards other parts of the image. Useful when images are not fully labeled; the model can then help with labeling or be trained further on more data. Only available for Object Detection. |
| Continue Training | If a previous training run exists for this model, continues from the last checkpoint instead of starting fresh. |
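The two percentage-based settings above translate into concrete numbers. The Python sketch below is illustrative only: it assumes patience is measured as a share of Max Training Time and that enrichment is a share of the number of images with objects. The function names are hypothetical and not part of ONE AI.

```python
def patience_minutes(max_training_min: float, patience_pct: float) -> float:
    """Minutes without improvement before early stopping triggers.

    Assumption: patience is a percentage of Max Training Time.
    """
    return max_training_min * patience_pct / 100.0


def should_stop(minutes_since_improvement: float, max_training_min: float,
                patience_pct: float) -> bool:
    # Hypothetical check: stop once the no-improvement window reaches the patience budget.
    return minutes_since_improvement >= patience_minutes(max_training_min, patience_pct)


def background_image_count(images_with_objects: int, enrichment_pct: float) -> int:
    """Images without objects mixed in when "Focus on Images with Objects" is on.

    100% means as many images without objects as images with objects.
    Rounding down is an assumption of this sketch.
    """
    return int(images_with_objects * enrichment_pct // 100)
```

For example, a 60-minute training with 10% patience stops after 6 minutes without improvement. With 100% patience the window equals the whole training time, so the run always uses the full duration.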

Validation Settings

Validation data is used during training to evaluate the model on unseen data and prevent overfitting.

| Parameter | Description |
|---|---|
| Use Validation Split | If you don't upload separate validation images or want to extend them, designate a portion of the training images as validation data. Make sure to use some form of validation (either upload images or use a validation split) for good results. |
| Validation Split (%) | Percentage of training images to use as validation. Recommended: 30% for small datasets, 20% for most datasets, 10% for large datasets. Set to 0% to exclusively use uploaded validation images. |
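Conceptually, a validation split moves a fixed share of the training images into a held-out set that the model never trains on. The sketch below is a hypothetical illustration, not how ONE AI implements the split internally: the seeded shuffle and the rounding are assumptions.

```python
import random


def split_validation(image_ids: list[str], val_pct: float,
                     seed: int = 0) -> tuple[list[str], list[str]]:
    """Randomly move val_pct percent of the training images into a validation set.

    Hypothetical helper; returns (train, validation).
    """
    ids = list(image_ids)              # copy so the caller's list is not shuffled in place
    random.Random(seed).shuffle(ids)   # seeded shuffle gives a reproducible split
    n_val = round(len(ids) * val_pct / 100)
    return ids[n_val:], ids[:n_val]
```

With `val_pct=0` the validation list is empty, which corresponds to relying exclusively on uploaded validation images.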

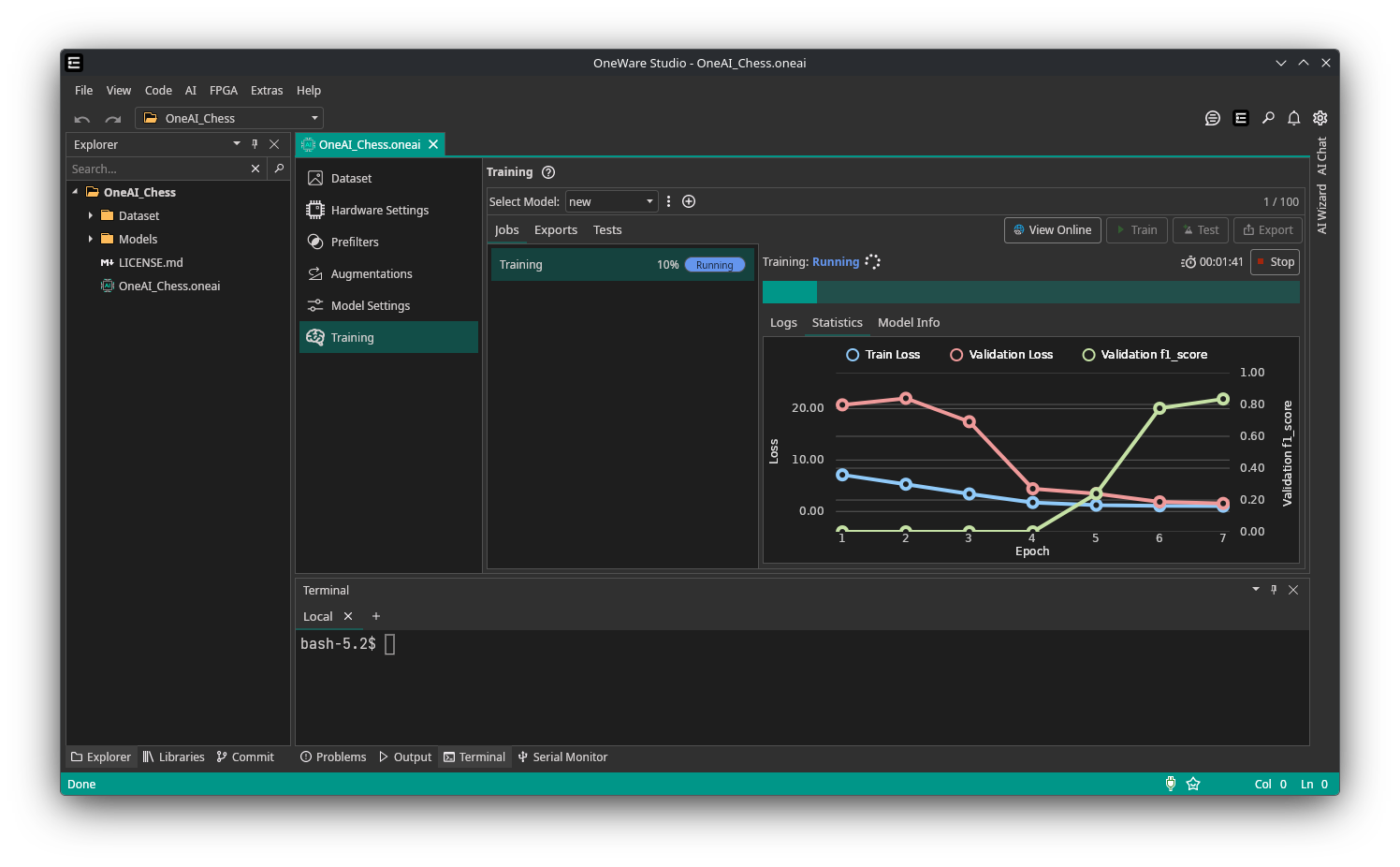

Cost & Progress

Credit cost is displayed before training starts. Early stopping may reduce actual cost below the estimate. Total time includes data upload and preprocessing overhead.

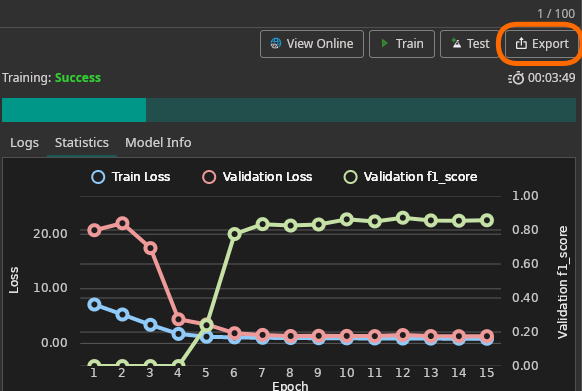

Training progress is visible in the Statistics tab. Training can be stopped manually at any time.

Testing

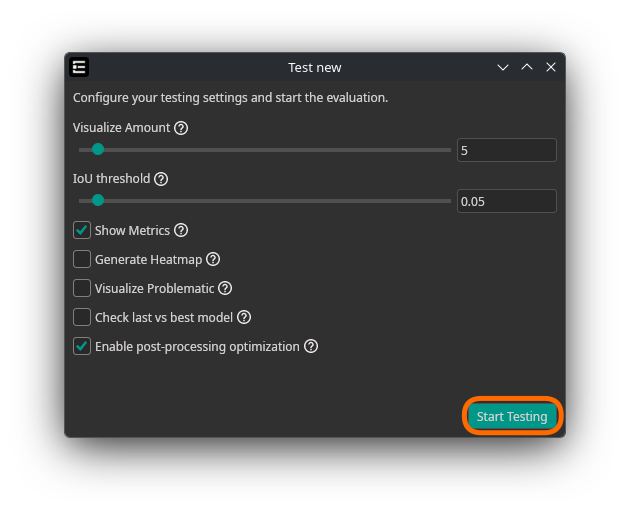

After training, you can evaluate your model's performance using the Test functionality. Testing runs the trained model against a set of images and provides metrics, visualizations, and diagnostics.

Data Sources

You can control which images are used for testing:

| Parameter | Default | Description |
|---|---|---|
| Train Image Percentage | 0% | Percentage of training images to include in testing. Useful when you have a limited dataset and want to see how the model performs on data it was trained on, or to get advanced metrics and visualizations. |
| Validation Image Percentage | 100% | Percentage of validation images to include in testing. Prioritize validation images to evaluate the model's ability to generalize to unseen data. |
| Validation/Test Split Percentage | 0% | Percentage of the validation dataset to reserve exclusively for testing (removed from validation during training). Use this with large datasets to ensure the model is evaluated on images it has never seen. Example: with a 30% validation split and 50% Validation/Test Split, 15% of the total dataset is used for testing and 15% for validation. |
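The Validation/Test Split example above can be worked through in image counts. This minimal sketch assumes all images are training images (no uploaded validation set) and rounds down with integer division; the platform's actual rounding is not documented here, and `split_counts` is a hypothetical name.

```python
def split_counts(n_images: int, val_split_pct: int,
                 val_test_split_pct: int) -> dict[str, int]:
    # Share of the dataset held out from training (Validation Split).
    held_out = n_images * val_split_pct // 100
    # Part of the held-out images reserved exclusively for testing (Validation/Test Split).
    n_test = held_out * val_test_split_pct // 100
    return {
        "train": n_images - held_out,
        "validation": held_out - n_test,
        "test": n_test,
    }
```

For 1,000 images, `split_counts(1000, 30, 50)` gives 700 training, 150 validation, and 150 test images, matching the 15% / 15% split described above.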

Parameters

| Parameter | Description |
|---|---|
| Visualize Amount | Number of images to visualize during testing (1–100). Helps you quickly assess model performance on a subset of images. |
| IoU Threshold | Intersection over Union (IoU) threshold used to decide whether a predicted bounding box matches an annotated object when evaluating object detection (0.01–0.99). Lower values (e.g. 0.05) count most objects as detected even if the bounding boxes are imprecise. Higher values (e.g. 0.5) require precise localization. |
| Show Metrics (advanced) | Display detailed performance metrics after testing, including precision, recall, F1-score, and mAP. |
| Generate Heatmap (advanced) | Generate attention heatmaps that visualize where the model focused during detection. Helps you understand the model's decision-making process and identify areas for improvement. |
| Visualize Problematic | Visualize images where the model struggled, such as false positives and false negatives. Guides further training or data collection efforts. |
| Check last vs best model (advanced) | Compares the last trained model with the best validation checkpoint. During training, two models are saved: the best validation model (selected without NMS post-processing) and the last trained model. This comparison determines which delivers better practical results with full post-processing. Useful for datasets prone to overfitting. |
| Enable post-processing optimization (advanced) | Test the model including pre- and postprocessing layers instead of only the trained neural network. Ensures consistent processing between training and inference and simplifies integration. |
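To see how the IoU Threshold affects evaluation, the sketch below computes IoU for axis-aligned boxes given as `(x_min, y_min, x_max, y_max)` and counts matches with a simple greedy one-to-one assignment. The box format and the matching strategy are assumptions for illustration; ONE AI's evaluator may order predictions by confidence or match them differently.

```python
Box = tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max), assumed format


def iou(a: Box, b: Box) -> float:
    """Intersection area divided by union area of two boxes."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def count_matches(preds: list[Box], truths: list[Box],
                  iou_threshold: float) -> tuple[int, int, int]:
    """Greedy matching; returns (true positives, false positives, false negatives)."""
    unmatched = list(truths)
    tp = 0
    for p in preds:
        # Pair each prediction with the still-unmatched annotation it overlaps most.
        best = max(unmatched, key=lambda t: iou(p, t), default=None)
        if best is not None and iou(p, best) >= iou_threshold:
            unmatched.remove(best)
            tp += 1
    return tp, len(preds) - tp, len(unmatched)
```

A prediction shifted by half a box width has an IoU of about 0.33: it counts as a true positive at a threshold of 0.05, but as one false positive plus one false negative at 0.5.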

Interpreting Results

After a test run completes, ONE WARE Studio displays:

- Performance metrics — precision, recall, F1-score, and mAP (when enabled)

- Visualized predictions — model outputs overlaid on test images

- Problematic images — false positives and false negatives highlighted for review

- Heatmaps — attention maps showing which image regions influenced predictions (when enabled)

Use these results to decide whether to deploy the model, adjust training settings, or collect additional training data.
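Precision, recall, and F1-score follow directly from the counts of true positives, false positives, and false negatives. The sketch below shows the standard formulas, plus a hypothetical `pick_model` helper that mirrors the idea behind "Check last vs best model": keep whichever checkpoint scores higher on one chosen metric. mAP is omitted because it additionally requires ranking predictions by confidence.

```python
def precision_recall_f1(tp: int, fp: int, fn: int) -> tuple[float, float, float]:
    """Standard detection/classification metrics; empty denominators yield 0.0."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


def pick_model(last: dict[str, float], best: dict[str, float], metric: str = "f1") -> str:
    # Hypothetical: ties go to the best-validation checkpoint (an assumption of this sketch).
    return "last" if last[metric] > best[metric] else "best"
```

For instance, 8 true positives, 2 false positives, and 4 false negatives give a precision of 0.8, a recall of about 0.67, and an F1-score of about 0.73.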

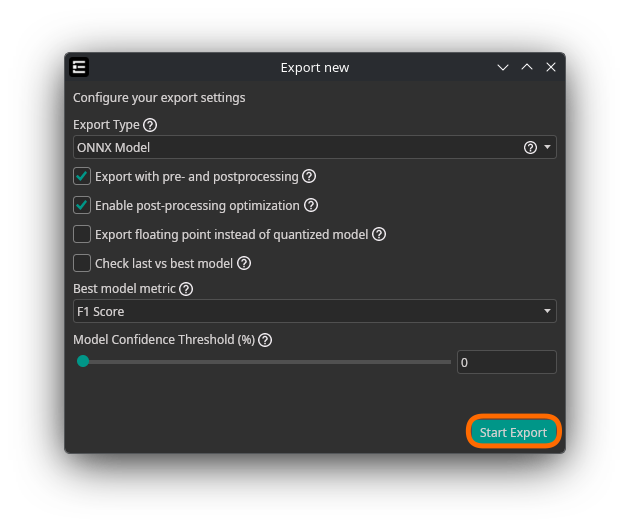

Export

Click Export to open the export dialog. Models can be exported as standalone files or embedded in software projects.

Parameters

| Parameter | Description |
|---|---|
| Export Type | Format and packaging for the trained model (see Export Types below) |
| Target | Target platform (currently Linux x64). Only visible for Executable and C++ Project exports. |
| Export with pre- and postprocessing | Includes preprocessing and output formatting layers in the model. Simplifies integration and ensures consistent processing between training and inference. |
| Enable post-processing optimization (advanced) | Applies optimized post-processing to the exported model. Only available when pre/postprocessing is enabled. |
| Export floating point instead of quantized model (advanced) | Ignores quantization-aware training and exports the full-precision model. Larger and slower but may improve accuracy for certain tasks. |
| Check last vs best model (advanced) | Compares the latest checkpoint against the best validation checkpoint, evaluating both with full post-processing (including NMS), to determine which model delivers better practical results. |
| Best model metric (advanced) | Metric used for the last-vs-best comparison: mAP, F1 Score, Precision, or Recall. Only active when "Check last vs best model" is enabled. |
| Model Confidence Threshold (%) (advanced) | Confidence threshold for model predictions. For object detection, only objects above this threshold are returned. For classification, all classes above the threshold are predicted as present. Set to 0 to let ONE AI estimate a suitable threshold. Note: this threshold cannot be decreased after export. |
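The sketch below illustrates both behaviors of the Model Confidence Threshold and suggests why it cannot be lowered after export: presumably, predictions below the threshold are already discarded by the exported model, so application code can only filter further. The data structures and the strict "greater than" comparison are assumptions for illustration, and the special meaning of 0 (letting ONE AI estimate a threshold) is not modeled.

```python
def filter_detections(detections: list[tuple[str, float]],
                      threshold_pct: float) -> list[tuple[str, float]]:
    """Object detection: keep only (label, confidence) pairs above the threshold."""
    t = threshold_pct / 100.0
    return [d for d in detections if d[1] > t]


def present_classes(scores: dict[str, float], threshold_pct: float) -> list[str]:
    """Classification: every class scoring above the threshold is predicted as present."""
    t = threshold_pct / 100.0
    return [name for name, score in scores.items() if score > t]
```

Filtering an already filtered list at 30% cannot bring back a detection that was dropped at 50%, which is why a lower threshold must be chosen before export.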

Export Types

| Export Type | Description |
|---|---|
| ONNX Model | Export in ONNX format for compatibility with various frameworks. Recommended for testing in ONE WARE Studio, Auto Labeling, and the Camera Tool. |
| TFLite Model | Export in TensorFlow Lite format, optimized for mobile and embedded devices. |
| TensorFlow Model | Export in standard TensorFlow SavedModel format for servers or desktops. |
| FPGA (VHDL) | Export as VHDL code for FPGA implementation. Experimental: currently only Classification is supported. |
| Executable | Export as a standalone executable file. Experimental: best for testing purposes. |
| C++ Project | Export as a C++ project that can be extended for custom integration. |

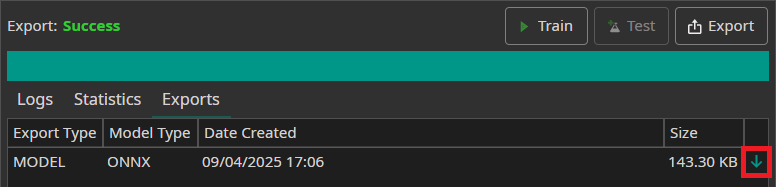

Downloading Exports

Completed exports appear in the Exports tab. Click the green arrow to download.

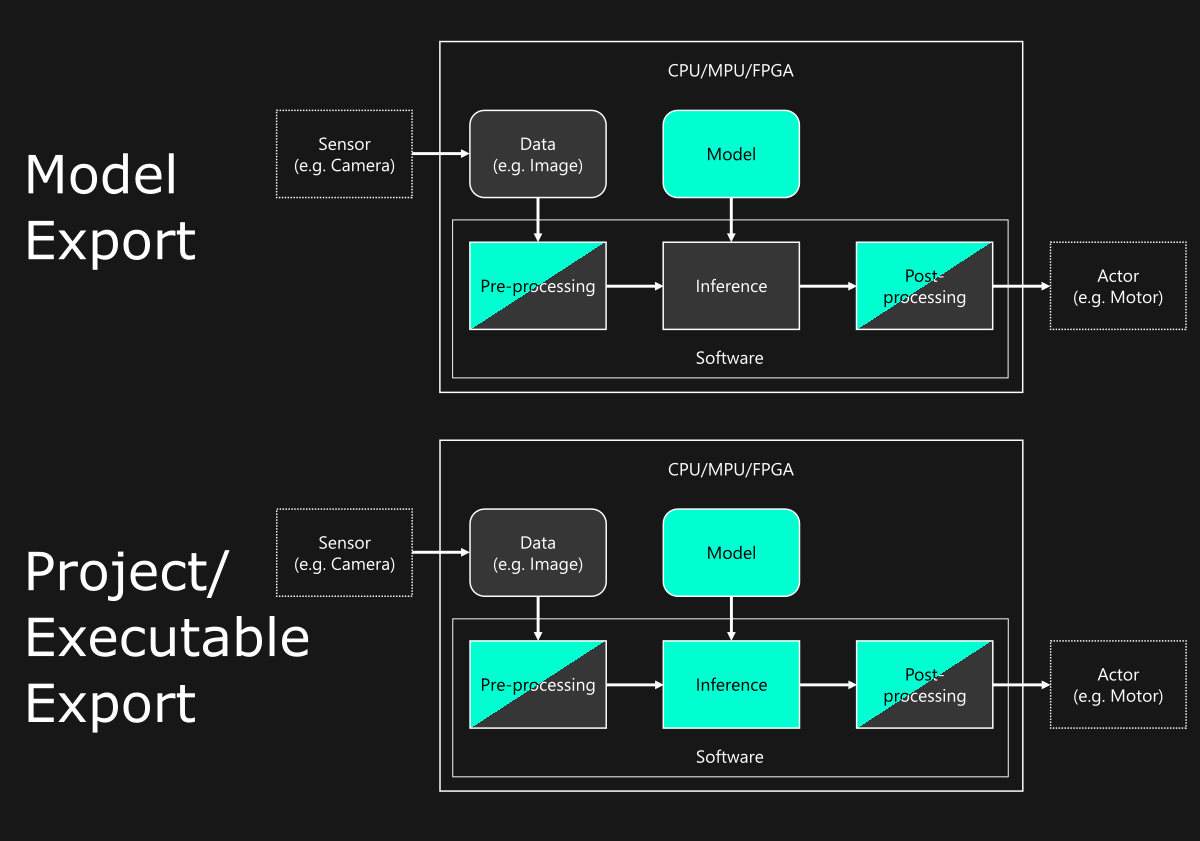

Integration

Model files require an inference engine matching the export format (e.g., TensorFlow Lite runtime or ONNX Runtime). The diagram below shows the integration toolchain — colored components are provided by ONE WARE.

For model I/O specifications (input tensors, output formats per task type), see Model I/O. For C++ project integration details, see the C++ API documentation.
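As a starting point for an ONNX export, the sketch below loads the model with ONNX Runtime (`pip install onnxruntime`) and reads the input and output names, shapes, and types from the file itself instead of hard-coding them; the exact tensor layouts are described in Model I/O. The helper names and the file name `model.onnx` are hypothetical; `InferenceSession`, `get_inputs`, `get_outputs`, and `run` are standard ONNX Runtime calls.

```python
def load_session(model_path: str):
    """Open an exported ONNX model with ONNX Runtime on the CPU."""
    import onnxruntime as ort  # imported lazily so the helpers below work without it
    return ort.InferenceSession(model_path, providers=["CPUExecutionProvider"])


def describe_io(session) -> dict:
    """List input/output names, shapes, and element types stored in the model."""
    return {
        "inputs": [(i.name, i.shape, i.type) for i in session.get_inputs()],
        "outputs": [(o.name, o.shape, o.type) for o in session.get_outputs()],
    }


def run_once(session, tensor) -> list:
    """Feed one tensor (e.g. a NumPy array) to the first input; return all outputs."""
    input_name = session.get_inputs()[0].name
    return session.run(None, {input_name: tensor})  # None = fetch every output
```

Typical use: `session = load_session("model.onnx")`, then `print(describe_io(session))` to check the expected input shape before preparing images and calling `run_once`.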
